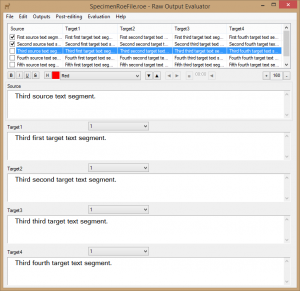

Raw Output Evaluator

Raw Output Evaluator is a tool that allows students, researchers and industry practitioners to compare the raw outputs of different machine translation engines, both with each other and with other translations of the same source text, and to carry out comparative human quality assessment using standard industry metrics. The program can also be used as a simple post-editing tool and, thanks to a built-in timer, to compare the time required to post-edit MT output with the time needed to produce an unaided human translation.

The tool was first developed for a postgraduate course module on the use of machine translation and post-editing, designed as part of the Master’s Degree in Specialist Translation and Conference Interpreting at the International University of Languages and Media (IULM), Milan, Italy.

It was first presented publicly at a workshop during the 40th edition of the annual Translating and the Computer Conference, held in London in November 2018. A full paper was published in the conference proceedings.

Extensive online help is available to make the tool as easy to use as possible. For new features, see ChangeLog.